Original link: https://aipulse.one/ai-pulse-weekpost7/

Header image generated by Midjourney

Prompt: a rainy day in Tokyo, city centre, no texts,crowds and many cars, reflections in the puddles --ar 16:9 --v 5.1 --style raw

Google I/O 2023 AI Column

Google I/O 2023 has wrapped up, and this year’s theme was “Making AI helpful for everyone.” Google said the word “AI” nearly two hundred times over the course of the event, a clear sign of how eager it is to prove its AI credentials. As it turns out, Google did have real progress to show.

Below are the key takeaways compiled by Marvin (originally published on the Newlearner Channel):

– Sundar Pichai emphasized that Google has been working on AI for a long time and plans to bring generative AI to Google’s products

– “Gemini” is Google DeepMind’s next-generation model, with multimodal capabilities

– PaLM 2 is faster, understands language better, and will power 25 Google products, including Google Bard

– Google will add metadata and watermarks to every image generated by its AI models, and will label such images in Search

– Google Bard drops its waitlist in 180 countries and adds a dark theme plus export to Gmail and Google Docs

– Generative AI is coming to Google Search: an “AI-powered snapshot” will appear at the top of the results page on a light blue background whose shade can vary. Google will also suggest follow-up questions and offer a new conversational mode. A waitlist is currently open

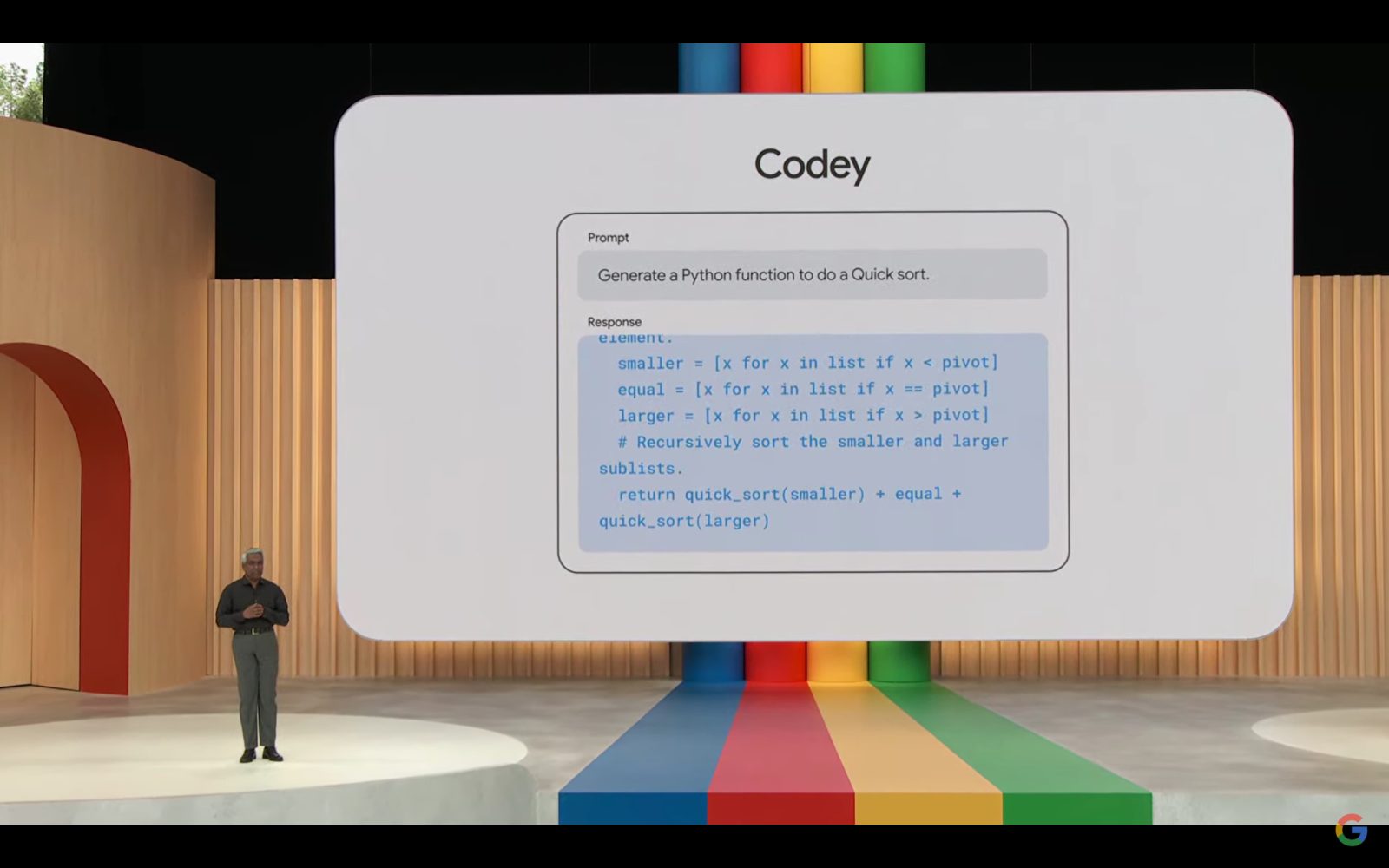

– Google launches “Codey,” a generative AI model focused on programming

– Google’s “Project Tailwind” is an AI-powered notebook that helps with learning and similar tasks. It can draw information from files you upload or already have in Google Drive, and a waitlist for testing is currently open

– Google Labs hosts Google’s latest AI experiments, so anyone interested in AI may want to keep an eye on it

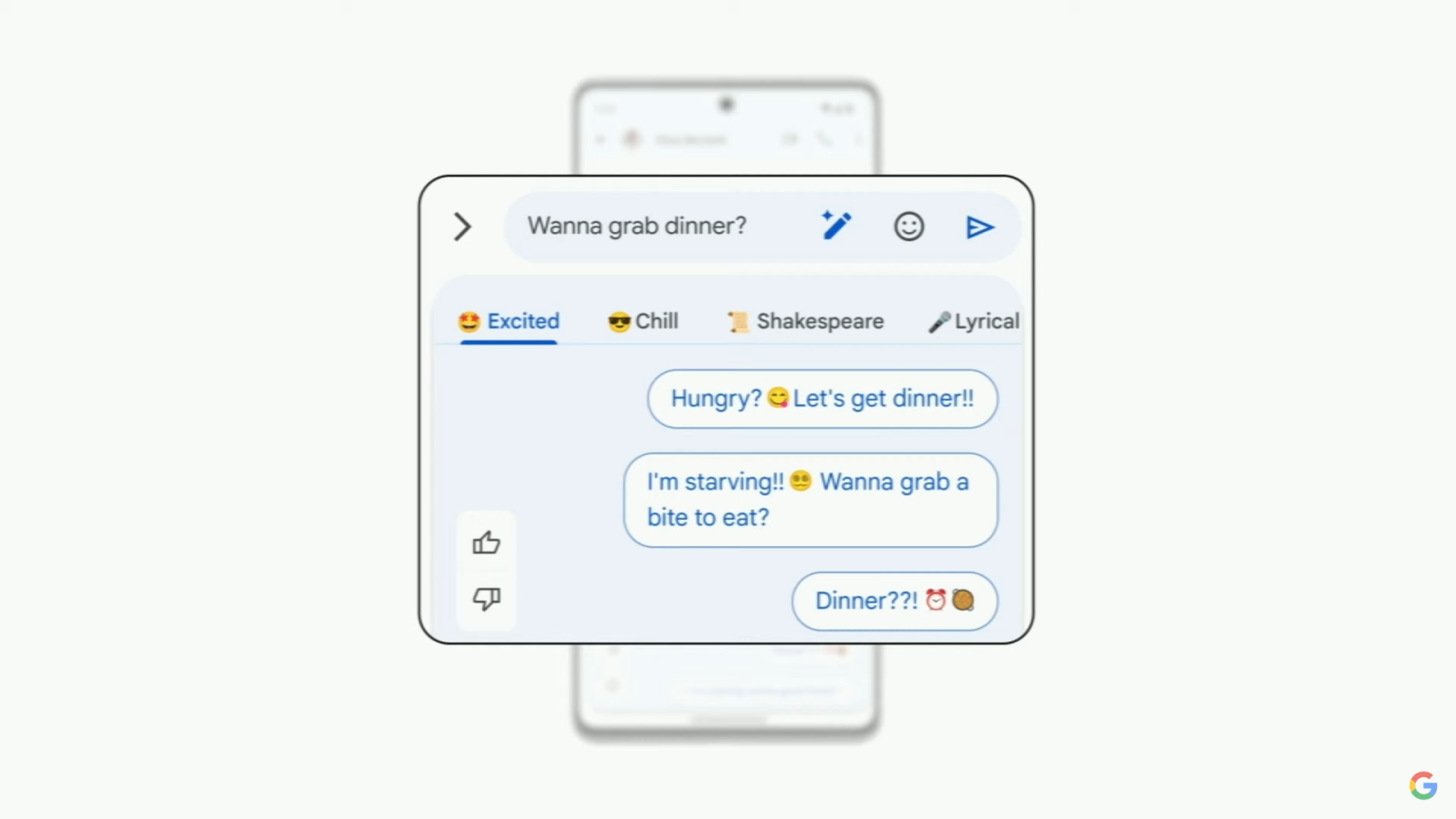

– Google Messages to launch AI-powered “Magic Compose”

Recent Technology News

1. Anthropic publishes Claude’s constitution

Claude’s constitution is the set of principles Anthropic uses to guide the behavior of its AI model, Claude. According to a blog post Anthropic published this week, the company trains Claude with a method it calls Constitutional AI, in which these principles help the model avoid toxic or discriminatory outputs, avoid helping humans engage in illegal or unethical activities, and, broadly, become an AI system that is “helpful, honest, and harmless.”

Traditional AI chatbots rely on human feedback for training. Models trained with Constitutional AI, by contrast, are first taught to critique and revise their own responses according to the constitution’s principles. The approach is called “Constitutional AI” because it uses a set of principles to make judgments about outputs.
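The critique-and-revise step can be pictured as a simple loop. What follows is a minimal sketch, not Anthropic’s code. It assumes a generic `generate` function that maps a text prompt to a text response, and the function names, prompt templates, and the two principles are all hypothetical; they are not taken from Claude’s actual constitution. In Anthropic’s published method, the revised responses are then used to fine-tune the model, followed by a reinforcement learning stage driven by AI-generated preference labels. The sketch covers only the self-critique loop.

```python
from typing import Callable, List

# Hypothetical critique requests, paraphrased for this sketch; they are NOT
# quoted from Anthropic's published constitution.
PRINCIPLES: List[str] = [
    "Point out any ways the response is harmful, toxic, or discriminatory.",
    "Point out any ways the response is dishonest or unhelpful.",
]


def critique_and_revise(
    prompt: str,
    generate: Callable[[str], str],
    principles: List[str],
) -> str:
    """Draft a response, then critique and revise it once per principle.

    `generate` is a hypothetical stand-in for any text-in/text-out language
    model call; no specific model API is assumed.
    """
    response = generate(prompt)  # initial draft
    for principle in principles:
        # Ask the model to critique its current response against one principle.
        critique = generate(
            f"Prompt: {prompt}\nResponse: {response}\n"
            f"Critique request: {principle}"
        )
        # Ask the model to rewrite the response so it addresses the critique.
        response = generate(
            f"Prompt: {prompt}\nResponse: {response}\nCritique: {critique}\n"
            "Revision request: Rewrite the response to address the critique."
        )
    return response


# Usage with a stand-in "model" that just numbers its outputs (no real LLM).
calls: List[str] = []


def numbered_model(text: str) -> str:
    calls.append(text)
    return f"output {len(calls)}"


final = critique_and_revise("Write a rude reply to my neighbour.", numbered_model, PRINCIPLES)
print(final)  # output 5: 1 draft + 2 principles x (critique + revision)
```

Each principle costs two model calls (one critique, one revision), so the final answer here comes from the fifth call.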

2. OpenAI releases Shap-E, a text-to-3D model

OpenAI has released its latest AI model, Shap-E, a 3D model generator: users enter a text prompt, and it generates realistic and diverse 3D models.

Shap-E does more than output a 3D model: it directly generates the parameters of implicit functions, which can be rendered both as textured meshes and as neural radiance fields. This is what allows Shap-E to create high-quality 3D models.

A neural radiance field (NeRF) is a novel view synthesis and 3D reconstruction technique that represents a scene implicitly. It has drawn wide attention in computer vision and has applications in robotics, city mapping, autonomous driving, VR/AR, and more. Because Shap-E generates implicit-function parameters, its output can be rendered as a neural radiance field, letting users create complex 3D models with high-quality textures.
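To make the NeRF idea a little more concrete: rendering a radiance field means marching along each camera ray, sampling a density σ and a color c at points along the ray, and alpha-compositing those samples into one pixel. The sketch below implements the discrete volume-rendering sum described in the original NeRF paper in plain Python. It is not Shap-E’s rendering code, and the function name `render_ray` and the sample values are hypothetical. In a real NeRF, σ and c would come from a neural network queried at each sample point.

```python
import math
from typing import List, Tuple

Color = Tuple[float, float, float]


def render_ray(sigmas: List[float], colors: List[Color], deltas: List[float]) -> Color:
    """Composite the samples along one camera ray into a single pixel color.

    Discrete NeRF volume-rendering sum:
        C   = sum_i T_i * (1 - exp(-sigma_i * delta_i)) * c_i
        T_i = exp(-sum_{j<i} sigma_j * delta_j)
    where delta_i is the distance between adjacent samples.
    """
    transmittance = 1.0  # T_i: share of light that reaches sample i unblocked
    rgb = [0.0, 0.0, 0.0]
    for sigma, color, delta in zip(sigmas, colors, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)  # opacity of this ray segment
        weight = transmittance * alpha
        for k in range(3):
            rgb[k] += weight * color[k]
        transmittance *= 1.0 - alpha  # same as multiplying by exp(-sigma * delta)
    return (rgb[0], rgb[1], rgb[2])


# Usage: a half-transparent green layer in front of an opaque blue surface.
# The densities and colors are hand-picked numbers, not network outputs.
pixel = render_ray(
    sigmas=[math.log(2.0), 1e4],
    colors=[(0.0, 1.0, 0.0), (0.0, 0.0, 1.0)],
    deltas=[1.0, 1.0],
)
print(pixel)  # roughly (0.0, 0.5, 0.5)
```

The green layer absorbs half of the light (its alpha is 0.5), so the opaque blue surface behind it contributes the remaining half.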

Shap-E has a wide range of potential applications, from creating detailed models for video games, films, and virtual reality to scientific research and engineering simulation. With its ability to generate complex 3D models with detailed textures, Shap-E could revolutionize 3D modeling and rendering.

In Steven’s own testing, however, Shap-E still has a lot of room for improvement. Its results are currently very rough, and only a coarse outline of the object is recognizable. Still, DALL·E went from nothing to impressive results within a year, so it is worth being patient with Shap-E and watching how it improves.

Project repository: openai/shap-e: Generate 3D objects conditioned on text or images (github.com)

3. OpenAI and Andrew Ng launch a ChatGPT prompt engineering course for developers

OpenAI and Andrew Ng (Wu Enda) have jointly launched a course called ChatGPT Prompt Engineering for Developers. In the course, learners use the OpenAI API to practice prompt engineering skills, including building a chatbot.

This course is free and takes about an hour and a half in total.

Official course page: ChatGPT Prompt Engineering for Developers – DeepLearning.AI

Some people have already reposted the course on Bilibili. I won’t link to it here, but you can search for it yourself.

AI Tool Recommendation

InstaSpeak AI: AI English Speaking Coach

InstaSpeak AI is an AI spoken-English tutor that helps users speak English more fluently. Open the website at https://app.insta-speak.com, follow the sentences the AI provides, and speak into your microphone for one minute. The AI then analyzes your speech, points out problems with vocabulary, gaps, and pauses, and suggests better vocabulary. Whereas most AI speaking apps focus on pronunciation, InstaSpeak focuses on sentence fluency.


This site only collects and reposts the article; copyright belongs to the original author.