Original link: https://selfboot.cn/2023/10/08/chatgpt_see/

On September 25, OpenAI announced new capabilities for ChatGPT: it can now see, hear, and speak. ChatGPT finally has "eyes" and can understand pictures.

During the National Day holiday, the image understanding feature reached my account through the staged (gray) rollout. Having tried it, I found it genuinely impressive. Below, I take a closer look at ChatGPT's image capabilities along the following dimensions.

- Object recognition: Check whether the model can accurately identify the main objects or elements in the image.

- Situational interpretation: Determine whether the model can infer the story or situation behind the image based on the content.

- Detail capture: Check whether the model can notice and describe tiny details or hard-to-notice parts of the image.

- Emotion interpretation: Evaluate whether the model can read the human emotions shown in an image from its content.

- Image comparison: Check whether the model can compare and contrast when multiple images are provided.

Object recognition

Let's first look at ChatGPT's object recognition. While out sightseeing over the National Day holiday, I saw some quite realistic food ornaments made of jade, and I gave a photo of them to ChatGPT to identify. The prompt was:

What foods are in this picture?

ChatGPT's answer was fairly good and exceeded my expectations, although some items were imprecise. There are no chicken feet in the picture, so I don't know what it took for chicken feet. It also identified the poached eggs as pig ears, which is a bit funny. ChatGPT's list was as follows:

- White buns (in yellow basket)

- Lotus root slices (round slices with holes)

- Eggs (look like hard-boiled and cut in half)

- Red dates

- Chicken feet

- Food that looks like pig ears (lower left corner)

- Some kind of candy or dried fruit (on the middle plate)

- Slices of meat of some kind (maybe beef or lamb, served in a bowl)

- Yellow food that looks like chicken or other poultry

- White round glutinous rice balls

- A yellow food in the shape of a chicken, possibly made from molds or pastries

- Long green vegetables (perhaps green beans or other similar vegetables)

I also tried a picture of various ancient books made of jade. ChatGPT judged that it probably showed replicas or works of art rather than actual ancient books. It could read the titles of some of the books, but it also hallucinated and listed some titles that are not in the picture.
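
The tests in this article were run by hand in the ChatGPT interface. For readers who would like to repeat this kind of test from a script, here is a minimal sketch of how a text prompt and an image can be packed into a single user message. It assumes the message shape that OpenAI's Chat Completions API uses for image input (a `text` part plus an `image_url` part carrying a base64 data URL). The helper name `build_vision_message` is hypothetical, and the sketch only builds the message; it does not call any API.

```python
import base64


def build_vision_message(prompt: str, image_bytes: bytes,
                         mime_type: str = "image/png") -> dict:
    """Build one user message that pairs a text prompt with an inline image.

    Assumption: the target API accepts a list of content parts, where the
    image is passed as a base64 data URL inside an "image_url" part.
    """
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            # The question, exactly as it would be typed into the chat box.
            {"type": "text", "text": prompt},
            # The picture, embedded inline so no public image URL is needed.
            {
                "type": "image_url",
                "image_url": {"url": f"data:{mime_type};base64,{encoded}"},
            },
        ],
    }
```

Calling `build_vision_message("What foods are in this picture?", image_bytes)` with the bytes of a photo yields a message that can be placed in the `messages` list of a request to a vision-capable model.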

Situational interpretation

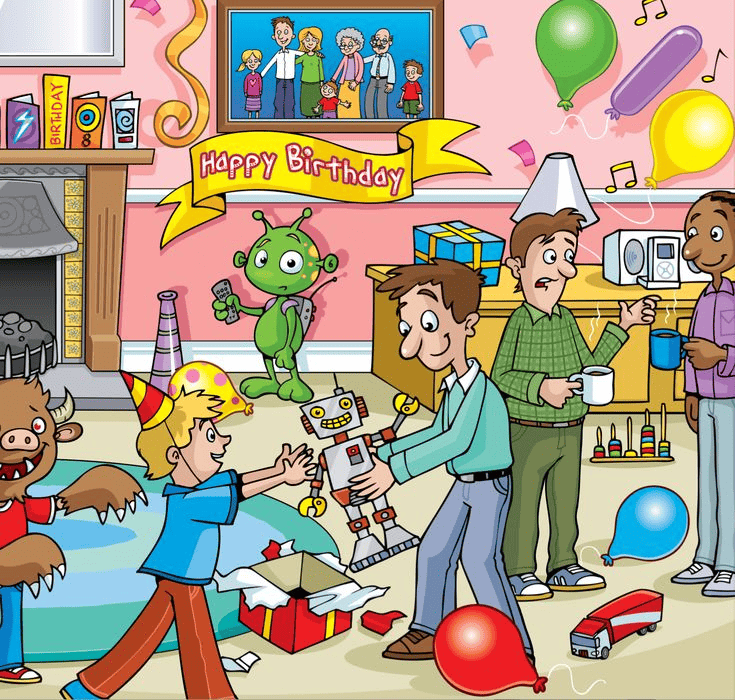

When I was in school, we often had picture-composition exercises: given a picture, write a paragraph describing the story or situation in it. Let's see how ChatGPT handles this kind of task. I used an illustration found on the Internet, with the following prompt:

Can you help describe the scene in this picture?

ChatGPT's answer was again impressive. It summarized the picture as a birthday party scene full of fun and laughter, and then gave a detailed description:

- In the center of the room is a little blond boy playing with a robot toy with an adult. They appear to be interacting, with the little boy reaching out to the robot.

- On the left side of the room, there is a little brown monster wearing a birthday hat who seems to be smiling.

- A green alien stands next to an open gift box, holding a remote control as if controlling a robot.

- There are two adults on the right side of the room. A man in a green shirt is pouring a drink to another man holding a cup.

- A streamer that says “Happy Birthday” hangs on the wall behind, and there’s a TV screen showing pictures of five people.

- There are many toys scattered on the ground, such as red cars, organs and balloons.

- There is a shelf with musical equipment against the wall, and notes waft out of the equipment, indicating that music is playing.

- Other decorations and items such as lanterns, candlesticks and birthday cakes are also scattered around the room.

The embarrassing part is that the framed photo on the wall was recognized as a TV, and the number of people it reported in the photo is not accurate.

Detail capture

The example above already shows that ChatGPT is good at capturing details: it noticed the toy cars, balloons, and other items scattered on the floor. We can also ask follow-up questions about specific details, such as "What is the black thing in the upper middle of the left side of the picture?". ChatGPT answered that this black object is a fireplace and that, judging from its structure and position, it should be a fixed decorative or architectural element of the room.

Next, I asked how many people are in the framed photo on the wall. This time ChatGPT stumbled. It first said 5 people; when I asked it to count again, it said 6. Finally, when I asked it to "look carefully", it still answered incorrectly: five people in the photo, two adults and three children.

Emotion interpretation

A human child less than a year old can already read the expressions and emotions of adults, so how well does ChatGPT understand expressions? To make testing easy, I used a single picture containing many facial expressions. The prompt was:

There are many facial expressions in this picture. What emotions are they? Please describe them to me one by one.

ChatGPT started from the upper left corner and described the expressions in order, from left to right and top to bottom. However, it initially gave 17 descriptions, so I told it there were 15 in total and had it regenerate them. To make it easy to match them against the picture, I arranged its output in a table whose cells correspond to the positions of the expressions.

| 1 | 2 | 3 | 4 | 5 |
|---|---|---|---|---|
| Thinking or confused | Surprise | Sad | Think | Expressive or indifferent |
| Smile | Frightened | Happy | Surprise | Deep thought |
| Displeasure or frown | Laughing out loud | Naughty or joking | Serious or expressionless | Happy or delighted |
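
The table above was put together by hand. As a small illustration, the same arrangement can be sketched in code: a flat list in reading order (left to right, top to bottom) is cut into rows of five. The function name `to_grid` is hypothetical. The length check also shows why the first answer, with 17 descriptions, could not be matched to a picture with 15 faces in rows of five.

```python
def to_grid(labels, columns):
    """Arrange a flat, row-major list of labels into rows of `columns` items."""
    if columns <= 0:
        raise ValueError("columns must be positive")
    if len(labels) % columns != 0:
        # For example, 17 descriptions cannot fill rows of 5.
        raise ValueError(f"{len(labels)} labels do not fill rows of {columns}")
    return [labels[i:i + columns] for i in range(0, len(labels), columns)]


# The 15 descriptions from the table, in the order ChatGPT listed them.
labels = [
    "thinking or confused", "surprise", "sad", "think",
    "expressive or indifferent",
    "smile", "frightened", "happy", "surprise", "deep thought",
    "displeasure or frown", "laughing out loud", "naughty or joking",
    "serious or expressionless", "happy or delighted",
]

for row in to_grid(labels, 5):
    print(" | ".join(row))
```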

Image comparison

Most people have played spot-the-difference games with a left and a right picture. They can be quite hard, and sometimes you simply cannot find the difference. So how does ChatGPT do? I found a picture on the Internet and gave it a try. The prompt was:

Find the differences between the left and right parts of this picture and describe them one by one.

ChatGPT's answer was badly hallucinated. It claimed the following differences between the left and right parts:

- The first obvious difference is that the lollipop in the middle of the left section is rainbow colored, while the lollipop in the middle of the right section is simpler in color.

- In the upper left corner of both parts there is a chocolate ice cream. The one on the left has more white dots, while the one on the right has fewer.

- In the left part there is a small candy to the right of the rainbow lollipop, but the right part does not have this small candy.

ChatGPT did see that there is a rainbow-colored lollipop in the middle of the left part. However, although the colors on the left and right are actually the same, it claimed that the one on the right is simpler in color. The other two differences it listed are also wrong. ChatGPT is still relatively weak at this type of task.

Summary

From the tests above, ChatGPT's image understanding is already quite good. It shows impressive potential in object recognition and situational interpretation, but it makes mistakes when counting and hallucinates badly when comparing images. The visual capabilities have only just been released, and there is still a lot of room for improvement. There is reason to believe that, with richer training data and further model iterations, ChatGPT will eventually be able to truly "see clearly".

Before the visual capabilities arrived, getting ChatGPT to generate front-end code meant going to great lengths to describe what the page should look like. Now you can simply hand it a design mockup or a rough sketch and let it write the code.
