Since Douyin and Kuaishou took off, short videos have become the most efficient way for people to “kill time.” But it was not until WeChat launched its video accounts that people seriously considered that short video might be more than a stage for internet celebrities and viral “earthy” clips, and that they too might be able to “get a piece of the action.”

“Is it too late to learn how to make short videos?” Many people have probably typed this question into a search engine. After all, a short video is unlike an official account, where an ID card and the ability to write are all you need. Making a short video requires at least background music, video footage, dubbing, and subtitles. Even a video built from meme stickers, in the style of “Half Buddha Immortal,” requires the creator to have simple, blunt logic and a gift for rapid-fire delivery. These challenges have stopped most people who want to get into the short video business.

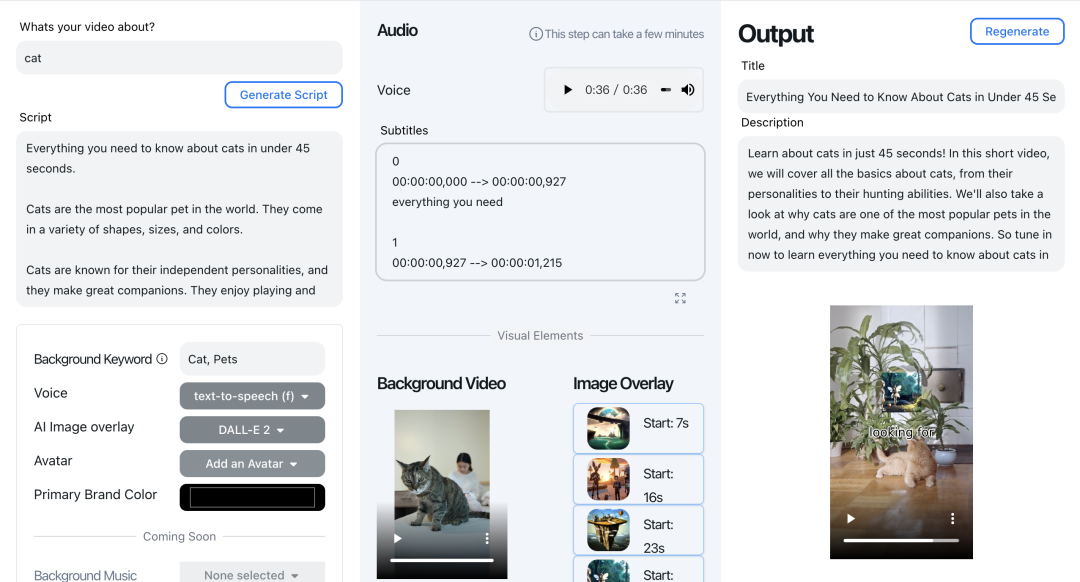

However, as AIGC technology matures, you now only need to enter a single word to generate a short video complete with voice-over, background music, and images. A website called QuickVid integrates several AIGC tools to satisfy people's fantasy of generating short videos with one click.

How does QuickVid do it? Are today's short video internet celebrities and uploaders about to be made obsolete?

01

The magic of automatically generating short videos

As its name suggests, QuickVid makes videos really “quick.”

Users only need to enter a prompt on the QuickVid website that clearly describes the topic of the video they want, and QuickVid starts producing a short video automatically.

When you press the “Submit” button, QuickVid does the following:

The workflow shown after entering the prompt “Cat” on the QuickVid website. | Screenshot source: https://ift.tt/5I9ipWJ

Based on the prompt, QuickVid first uses GPT-3's text generation to write a short video script. Keywords are then extracted from the script automatically or entered manually, and used to select background videos from the free Pexels library. QuickVid overlays images generated by DALL-E 2 on top, calls Google Cloud's text-to-speech API to add a synthesized voice-over, and adds background music from YouTube's library of royalty-free music.
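The workflow described here can be sketched as a simple pipeline. This is only an illustration under stated assumptions, not QuickVid's actual code: every function and class name is hypothetical, and each stage returns a placeholder string where the real service (GPT-3, Pexels, DALL-E 2, Google Cloud text-to-speech, YouTube's music library) would be called.

```python
import string
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical stop-word list for the naive keyword step below.
STOP_WORDS = {"a", "an", "the", "of", "about", "and", "three", "facts"}


@dataclass
class VideoPlan:
    """All the pieces needed to assemble one short video (illustrative only)."""
    prompt: str
    script: str
    keywords: List[str]
    background_clips: List[str]
    overlay_images: List[str]
    voice_over: str
    music_track: str


def write_script(prompt: str) -> str:
    # Placeholder for the GPT-3 script-writing step.
    return f"Three surprising facts about {prompt}."


def extract_keywords(script: str, manual: Optional[List[str]] = None) -> List[str]:
    # Manually entered keywords win; otherwise filter the script naively.
    if manual:
        return list(manual)
    keywords: List[str] = []
    for word in script.split():
        cleaned = word.strip(string.punctuation).lower()
        if cleaned and cleaned not in STOP_WORDS and cleaned not in keywords:
            keywords.append(cleaned)
    return keywords


def pick_background_clips(keywords: List[str]) -> List[str]:
    # Placeholder for a stock-footage lookup in the Pexels library.
    return [f"pexels:{keyword}" for keyword in keywords]


def generate_overlay_images(keywords: List[str]) -> List[str]:
    # Placeholder for DALL-E 2 image generation.
    return [f"dalle2:{keyword}" for keyword in keywords]


def synthesize_voice_over(script: str) -> str:
    # Placeholder for a text-to-speech call.
    return f"tts:{len(script)} chars"


def pick_music() -> str:
    # Placeholder for choosing a royalty-free background track.
    return "royalty-free-track"


def build_plan(prompt: str, manual_keywords: Optional[List[str]] = None) -> VideoPlan:
    """Run the stages in the order the article describes."""
    script = write_script(prompt)
    keywords = extract_keywords(script, manual_keywords)
    return VideoPlan(
        prompt=prompt,
        script=script,
        keywords=keywords,
        background_clips=pick_background_clips(keywords),
        overlay_images=generate_overlay_images(keywords),
        voice_over=synthesize_voice_over(script),
        music_track=pick_music(),
    )


if __name__ == "__main__":
    print(build_plan("cat"))
```

Because the background clips and overlay images both depend on the keywords, the keyword step has the most influence on how well the result matches the topic.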

With this basic skeleton in place, what QuickVid generates is the kind of short video typical of YouTube and TikTok.

As the example above shows, the short videos QuickVid generates in bulk are not bad in quality and even feel a little familiar. They instantly call to mind scenes from everyday life, and you may not be able to tell whether a video was made by a machine or a human.

The technology commentator “Comment Zombie” put it well: “A combination of several technologies at this stage really can change people's daily content consumption habits completely: ChatGPT, AI painting, memes, and short videos.” The short videos generated by tools such as QuickVid are exactly what people like right now. “Memes built on animated stickers plus AI voice synthesis and dubbing go viral again and again on TikTok and Douyin.”

But that is as far as it goes. QuickVid, which “gathers the strengths of every school,” has not gone beyond what generative AI has already shown to be possible.

Precisely because of how it works, the quality of the short videos QuickVid generates is unstable. One example is the relevance of the background videos: since QuickVid is currently limited to the Pexels library, the randomly selected background videos are often slightly off-topic. The images generated by DALL-E 2, meanwhile, show the limitations of current text-to-image techniques, such as garbled text and distorted proportions. Founder Habib says QuickVid is “tested and tinkered with every day.”

Daniel Habib, a self-taught developer who worked on Facebook Live and video infrastructure at Meta, built QuickVid, a short video generator, in just a few weeks.

Nevertheless, QuickVid shows one way short videos can be generated with existing technology. After all, compared with established large companies, unburdened startups can be bolder with their products, because trial and error costs them almost nothing.

By combining existing AI technology with the repetitive, templated format of the many short videos built on stock footage, QuickVid avoids the problem of having to generate footage itself.

So, will a product like QuickVid become a new feature developed by giants such as Meta and Google to simplify short video production? Or is it just a short-lived “toy” like many generative AI applications?

02

When creators start competing at “chanting spells”

If the advent of Stable Diffusion (an AI image generator) and Jasper (an AI copy generator) opened up AI productivity to domain specialists such as artists and marketers, QuickVid goes further and extends it to ordinary users of short video platforms such as Douyin and Kuaishou.

Short videos already capture most of people's attention in their spare time. Now that QuickVid lowers the barrier to creating them, what impact will that have?

According to Daniel Habib, the creator of QuickVid, the tool was built to help creators keep up with the needs of their audiences. By giving creators a way to produce high-quality content quickly and easily, it aims to help them increase output, reduce the risk of creative burnout and dried-up inspiration, and meet fans' “growing” needs.

It sounds like Daniel Habib has found an excellent use case for QuickVid, one that hits the pain points and needs of short video creators. But can QuickVid really help creators meet audience needs?

When the barrier to generating short videos drops to simply entering a prompt, creators can indeed produce as many videos as they want. But another question has to be considered.

In the past, every step of short video production (scriptwriting, shooting footage, editing, even dubbing) offered a way to stand out from competitors with a clever twist and win traffic. With QuickVid, all that competition collapses into entering prompts. Can creators really stand out when the contest comes down to whose “spell” the machine understands most easily?

On the contrary, I am afraid the more likely outcome is that already crowded short video platforms fill up with homogeneous content and spam.

Regarding the proliferation of spam, Habib believes that the short video platforms' algorithms, not QuickVid, are best placed to judge video quality, and that those who produce low-quality content “will only damage their own reputation.” A damaged reputation will naturally discourage people from using QuickVid to churn out spam. In other words, “If people don’t want to watch your videos, they won’t be distributed and circulated on platforms like YouTube, and producing low-quality content will make your account look negative.”

More pressingly, QuickVid faces challenges common to all AIGC applications.

The first is “toxic” content that cannot be eradicated by generative AI applications, that is, short videos that are false, harmful or have incorrect values.

Currently, GPT-3 still spreads disinformation, especially about recent events that fall outside its knowledge base. ChatGPT, improved from GPT-3, has been shown to be prone to sexist and racist language. Although OpenAI has filtering technology to block such toxic content, it does not work well.

QuickVid, which relies on GPT-3, will inevitably generate toxic content too.

TechCrunch writer Kyle Wiggers and friends tested QuickVid by typing in some offensive prompts to see what it would produce.

The results showed that clearly questionable prompts such as “Jewish New World Order” and “9/11 conspiracy theory” did not generate toxic scripts. But for “instilling critical race theory in students,” QuickVid produced a video suggesting that critical race theory could be used to brainwash schoolchildren.

This is worrisome, especially for those who use QuickVid to create informative videos.

In this regard, Habib said that QuickVid relies on OpenAI’s filters to do most of the review work, and claimed that users are obliged to manually review each video created by QuickVid to ensure that “everything is within the law.”

But this seems untenable. If things worked as Habib says, short video platforms such as Douyin and Kuaishou could already do away with their heavy, expensive manual review. The reality is that another toxic video is always on the way, and relying on everyone's self-discipline is impossible.

Another dilemma is copyright issues.

The copyright status around AI-generated content is murky, at least for now. For example, the United States Patent and Trademark Office (USPTO) recently revoked copyright protection for cartoons generated by artificial intelligence, saying that copyrighted works require human authors.

Asked how the USPTO decision would affect QuickVid, Habib said it only concerns the “patentability” of AI products, not the rights of creators to monetize their content.

He pointed out that creators rarely file patents for videos; mostly they just want to make money from short videos. They care less about patents than about publishing high-quality content that helps expand their accounts' reach. He believes QuickVid users retain the right to commercialize the content they create, that is, to monetize it on platforms such as YouTube.

Additional legal challenges could also affect QuickVid’s DALL-E 2 integration, and thus its ability to generate image overlays.

Recently, Microsoft, GitHub, and OpenAI were named in a class action lawsuit alleging that they violated copyright law by allowing the code generation system Copilot to reproduce licensed code without providing attribution. (Copilot was jointly developed by OpenAI and Microsoft-owned GitHub.) The case also has implications for generative art AI such as DALL-E 2, which has likewise been found to copy and paste images from its training datasets.

Habib isn’t worried. He believes that the Pandora’s box of generative AI has been opened. “If there is another lawsuit tomorrow and OpenAI disappears, there are several alternatives that could power QuickVid,” he said, referring to the DALL-E 2-like system Stable Diffusion. QuickVid is already testing using Stable Diffusion to generate avatar images.

After many years of innovation in the application layer, the emergence of ChatGPT has shown people the possibility of “redoing the application layer all over again.” Amid this wave of generative AI innovation, the automatic short video generator may be the tool with the most room for imagination. After all, short video is currently the most commercialized media format.

As for how AIGC can lower the barrier to creation: if its applications have so far stayed at painting and writing, video is undoubtedly the next “fortress” it will take. QuickVid is just the first AIGC scout to charge at the video fortress, and behind it “thousands of troops” are about to come roaring in.

More importantly, in the face of new technologies and new tools, what measures will the platforms, users, and regulators inside the fortress take to balance “inclusive creation” against the “proliferation of useless content”?

This article is reposted from: https://www.geekpark.net/news/314387
