
Source: Yuanchuan Research Institute

On August 10, an Xpeng P7 in assisted driving mode collided with a vehicle stopped on an expressway, killing one person at the scene.

According to the official statement, a road maintenance vehicle was parked in the leftmost lane of the elevated road at the time of the incident (an illegal operation), with staff working at its rear. The P7 is suspected of driving straight into it without slowing down, striking the worker and throwing him clear.

Xpeng is recognized as one of the Chinese companies most advanced in autonomous driving.

In fact, accurately identifying an irregular stationary object and responding correctly in time is a hellishly hard problem for current assisted driving systems, which is why similar accidents have kept occurring over the past two years.

On August 8, a Li ONE with NOA (Navigation Assisted Driving) turned on collided with a highway engineering vehicle. In August last year, a NIO ES8 in assisted driving mode also crashed into a construction vehicle at work, and the owner died. Tesla has been involved in even more fatal accidents in similar scenarios.

Stationary objects on the highway have become an almost deadly scenario for assisted driving.

01

Camera + millimeter-wave radar = frog eyes?

All current models with assisted driving come with AEB (Automatic Emergency Braking) as standard, to prevent rear-end collisions and mitigate crashes.

The problem is that AEB only takes effect under fairly strict conditions: it easily fails when another vehicle cuts in, it easily fails on stationary objects, and it may fail when the vehicle speed exceeds a certain range (usually 60-80 km/h).

Why is assisted driving so temperamental? The blame is generally placed on the weakness of the perception system.

The hardware of the Xpeng P7 involved in the August 10 incident is modest by today’s standards. Its XPILOT 2.5 system has 5 cameras and 3 millimeter-wave radars, and its computing power is 30 TOPS (the industry generally believes that L3 and above autonomous driving requires hundreds to thousands of TOPS). The main sensors responsible for high-speed scenarios are one millimeter-wave radar and one monocular camera.

At the time of the incident, the two sensors failed to identify the parked work vehicle in time due to their respective limitations.

Li Xiang, the founder of Li Auto, once said: “The combination of a camera and a millimeter-wave radar is like the eyes of a frog. It is good at judging moving objects, but almost helpless against non-standard stationary objects.”

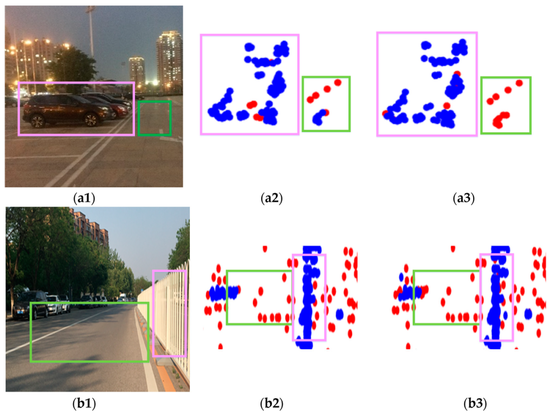

Of the two, millimeter-wave radar can measure the distance to an object ahead but cannot measure its height, and its information density is low. Trusting it too much makes the assisted driving system jump at shadows, and “phantom braking” becomes frequent.

To reduce the false trigger rate, today’s millimeter-wave radars are often set to automatically filter out stationary targets at high speeds. So even when the radar does detect a stationary object ahead, the system either ignores it or struggles to respond in time.
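The filtering described here can be illustrated with a minimal sketch. It is not any manufacturer's actual logic: the `RadarTarget` type, the function names, and both thresholds (a 60 km/h "high speed" cutoff and a 1 m/s tolerance) are hypothetical values chosen for illustration. The idea is only that a target whose closing speed equals the car's own speed is not moving over the ground, so a filter of this kind discards a parked vehicle just as it discards a signpost or an overhead gantry.

```python
from dataclasses import dataclass


@dataclass
class RadarTarget:
    range_m: float            # distance to the target, in meters
    closing_speed_mps: float  # how fast the gap is shrinking (positive = approaching)


def ground_speed_mps(ego_speed_mps: float, target: RadarTarget) -> float:
    """Target's own speed along the road, inferred from ego speed and closing speed."""
    return ego_speed_mps - target.closing_speed_mps


def filter_targets(ego_speed_mps, targets,
                   high_speed_mps=60 / 3.6,    # hypothetical "high speed" cutoff
                   stationary_tol_mps=1.0):    # hypothetical stationary tolerance
    """Drop targets that look stationary, but only when the car is driving fast."""
    if ego_speed_mps < high_speed_mps:
        return list(targets)
    return [t for t in targets
            if abs(ground_speed_mps(ego_speed_mps, t)) > stationary_tol_mps]


ego = 80 / 3.6                                        # 80 km/h in m/s
parked_vehicle = RadarTarget(range_m=120.0, closing_speed_mps=ego)
lead_car = RadarTarget(range_m=60.0, closing_speed_mps=ego - 20.0)
print(filter_targets(ego, [parked_vehicle, lead_car]))  # only the lead car remains
```

Under these assumptions the parked vehicle never reaches the planning stage at 80 km/h, while at low speed the same target would be kept.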

Right: the point cloud detected by a traditional millimeter-wave radar. Relying on such data for accurate identification is unrealistic.

Monocular cameras struggle to measure distance accurately, and their perception of the environment relies on deep-learning-based visual recognition, which requires training on large-scale data. If there is not enough training data for a scene or object, the camera may treat the object as nonexistent, or misidentify it as background such as road or sky.

In 2016, 2020, and 2021, three Teslas with Autopilot engaged crashed into white trucks on the road. The investigations into the causes all point to the camera: it mistook the white body of the truck for the sky [1].

In China, work vehicles of all shapes have become a shared nightmare for the new EV makers. In the Xpeng P7 accident, the vehicle that was hit was an ordinary car, and a person was standing behind it at the time, which made identification even harder: the camera can identify people and vehicles separately, but when the two overlap, their features interfere with each other and the result is a completely new object to the camera.

To a human this seems absurd, but similar cases are not uncommon: a portrait in an advertisement pasted on a car can be recognized by the assisted driving system as a real person running at 60 kilometers per hour.

In addition, visual recognition algorithms usually need to run for some time to produce a result, which can take hundreds of milliseconds [2]. For stationary objects, the recognition time may stretch further, to 2-4 seconds or more. At 80 km/h the vehicle travels roughly 44-88 meters in that time, which further shrinks the system’s margin for error [3].
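The 44-88 meter figure is simple arithmetic: speed multiplied by recognition time. The short sketch below reproduces it. The function name is made up for illustration, and the 0.3-second case is an assumed stand-in for "hundreds of milliseconds", not a figure from the cited sources.

```python
def distance_travelled_m(speed_kmh: float, latency_s: float) -> float:
    """Distance covered while the system is still working out what it sees."""
    speed_mps = speed_kmh / 3.6  # convert km/h to m/s
    return speed_mps * latency_s


for latency_s in (0.3, 2.0, 4.0):
    distance = distance_travelled_m(80, latency_s)
    print(f"{latency_s:.1f} s at 80 km/h -> {distance:.1f} m")
# 0.3 s at 80 km/h -> 6.7 m
# 2.0 s at 80 km/h -> 44.4 m
# 4.0 s at 80 km/h -> 88.9 m
```

The distance grows linearly with latency, so every extra second of recognition time costs about 22 meters of road at this speed.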

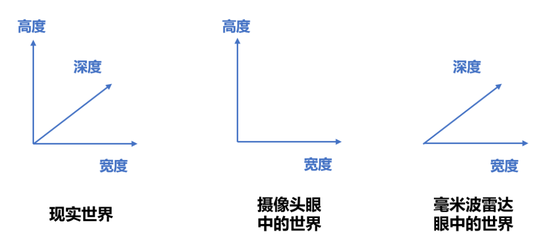

To a certain extent, most existing assisted driving systems rely on two “two-dimensional creatures”, the monocular camera and the traditional millimeter-wave radar, to understand the world: the camera cannot see depth, and the millimeter-wave radar cannot measure height. But reality is complex and three-dimensional, so assisted driving needs humans, the intelligent three-dimensional creatures, as its backstop.

Regrettably, there are always overly optimistic drivers who push the assisted driving system beyond what it can do and use it as a driverless system.

02

Upgrade the software and hardware, and everything will be fine?

Over the past two or three years, car companies have excited users with high-performance chips offering tens to hundreds of TOPS of computing power and with thirty or even forty sensors.

However, few users realize that doubling the computing power and the number of sensors does not double the assisted driving capability. The sensors that actually come into play are often only a few.

In the 2021 NIO ES8 collision, the vehicle was equipped with 24 sensors, including a trinocular camera, 4 surround-view cameras, 5 millimeter-wave radars, and 12 ultrasonic radars. Although it was armed to the teeth, only the trinocular camera and one forward millimeter-wave radar could effectively detect what was ahead when driving at high speed.

In theory, multi-lens cameras can form stereo vision through parallax and gain three-dimensional perception. However, because of limitations such as computing power requirements, reliability, and perception distance, the NIO ES8’s trinocular camera does not use stereo vision algorithms. It is a stack of three monocular cameras (similar to the telephoto, mid-range, and wide-angle lenses of a mobile phone).
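The parallax principle, and the perception-distance limit that comes with it, can be sketched with the textbook pinhole stereo relation: depth = focal length × baseline / disparity. The focal length (1000 pixels) and baseline (0.2 m) below are hypothetical numbers picked for illustration, not the specifications of any production camera.

```python
def stereo_depth_m(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth of a point from the pixel shift (disparity) between two camera views."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_px * baseline_m / disparity_px


FOCAL_PX = 1000.0   # hypothetical focal length, in pixels
BASELINE_M = 0.2    # hypothetical spacing between the two lenses, in meters

for disparity_px in (20.0, 2.0, 1.0):
    depth = stereo_depth_m(FOCAL_PX, BASELINE_M, disparity_px)
    print(f"disparity {disparity_px:4.1f} px -> depth {depth:6.1f} m")
```

With these assumed values, a one-pixel matching error at long range is the difference between 100 m and 200 m, which gives a sense of why perception distance limits stereo vision on a car-sized baseline.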

In essence, this is no different from mainstream assisted driving hardware.

Therefore, the focus of this year’s new products from Tesla and the new EV makers is not simply to pile on more sensors, but to improve sensor quality, improve sensor fusion, and upgrade software algorithms, so that smart cars can “live in three-dimensional space” and hone their autonomous driving ability in the form of assisted driving.

Tesla chose a pure-vision route: it rebuilt its algorithm framework, uses AI-driven cameras to measure distance, and uses deep learning algorithms to convert the two-dimensional images collected by multiple high-definition cameras into a three-dimensional bird’s-eye view.

However, this path still relies on training with large amounts of data. In the beta version of FSD (Full Self-Driving, which Tesla calls a fully autonomous driving system but which US regulators classify as assisted driving) that Tesla first pushed to a small number of owners, there is still a clear gap between its driving and a human’s, and it is not uncommon for it to misread the road or hit roadside posts.

Tesla FSD drives straight into a roadside post, and even a skilled driver cannot save the car

Compared with Tesla’s unorthodox approach, other companies tend to take a multi-sensor route combining cameras, millimeter-wave radar, and lidar.

Among them, high-definition cameras have been widely deployed in domestic L2-level assisted driving systems because they help to improve the recognition ability.

In the field of millimeter-wave radar, to address the low information quality of traditional radars, companies such as Bosch, Continental, Huawei, and Aoku have developed 4D millimeter-wave radars with three-dimensional perception capabilities. However, this technology is not yet mature and has not been mass-produced. Its resolution also falls short of cameras and lidars.

In this context, lidar has become the most popular smart driving sensor this year.

Lidar measures the distance to an object by emitting laser pulses and detecting the reflected signals, and builds a three-dimensional model of the surrounding environment. Lidars with a high beam count offer not only long detection range and accurate ranging, but also higher angular resolution. These characteristics make it hard for lidar to overlook a large stationary object lying across the road.
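The pulse-and-echo ranging described above comes down to one line of arithmetic: the pulse travels to the object and back, so the distance is the speed of light times the round-trip time, divided by two. The sketch below shows that relation only. The function name and the sample timings are illustrative assumptions, and a real lidar also has to handle noise, multiple returns, and beam scanning.

```python
SPEED_OF_LIGHT_MPS = 299_792_458.0  # speed of light in vacuum, in m/s


def tof_range_m(round_trip_s: float) -> float:
    """Distance to a reflecting object from the round-trip time of a laser pulse."""
    return SPEED_OF_LIGHT_MPS * round_trip_s / 2.0


for round_trip_ns in (100, 500, 1000):  # illustrative echo delays in nanoseconds
    distance = tof_range_m(round_trip_ns * 1e-9)
    print(f"echo after {round_trip_ns:4d} ns -> object at about {distance:.1f} m")
```

Because the measurement is a direct timing rather than an inference from image features, the distance does not depend on whether the object resembles anything seen in training data.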

The second-generation platform models of NIO, Xpeng, and Li Auto, as well as high-end models such as Changan’s Avatr and SAIC’s IM (Zhiji), are equipped with lidar as an important means of upgrading assisted driving.

NIO ET7 lidar perception effect

Still, lidar is not omnipotent: its detection ability is affected in strong light and in rain. In addition, because data samples are scarce, lidar recognition algorithms are not yet mature enough.

In a multi-sensor smart car perception architecture, how to integrate and trust the signals of cameras, millimeter-wave radars, and lidars is still a problem to be solved.

The above series of configurations are only to solve the perception problem in autonomous driving. After that, there are still technical problems in planning, control, and execution to be solved.

This may mean that, for quite some time, car owners will have to use assisted driving with mixed feelings: on the one hand they enjoy a lighter driving task, and on the other hand they have to carefully supervise an immature system so that its failures do not cause irreversible consequences.

03

User education is harder than technology upgrades

A few years ago, when hot money was pouring into the autonomous driving industry, practitioners were keen to discuss futuristic trolley problems such as “in a driverless accident, should the car protect its owner or the pedestrian?”

But the facts have now shown that teaching the public to use assisted driving correctly is more urgent. In the first half of this year, the penetration rate of L2-level assisted driving in China reached 30% [4].

As mentioned above, what assisted driving demands of the driver’s attitude is almost a philosophical paradox: if you do not trust it, it is pointless, and if you trust it too much, it may cost you your life.

In 2017, Waymo, Google’s self-driving company, found that human nature eventually fails this test: once employees testing its assisted driving system became familiar with it, they would doze off, put on makeup, and play with their phones at 90 kilometers per hour, and were simply unable to take over [5].

As a result, Waymo abruptly stopped developing assisted driving and put all its effort into driverless vehicles [5]. Although Waymo is ridiculed for this decision today from a business perspective, the choice is hard to criticize from an ethical or risk-avoidance perspective.

While Waymo’s self-driving cars are still accumulating data in Phoenix, Arizona, some car companies have chosen to first market assisted driving as autonomous or even driverless driving, and then deliver on the promise in installments. In practice, it is not uncommon for users, intentionally or not, to use assisted driving as autonomous driving, or even to show it off as such.

User demo: how to use an orange to fool one brand’s steering wheel detection

This triggers a theater effect in assisted driving: when one person stands up to watch the show, the people behind can no longer stay seated.

When one car company can promise to “achieve autonomous driving this year” every year without paying any price, other car companies that do not follow suit can only absorb the unfair competition from their rivals.

Xpeng is typical of many car companies: on the one hand, it vigorously promotes “full-stack self-development” as its core competitiveness; on the other hand, it equips its vehicles with DMS (Driver Monitoring System, which can warn the driver or exit assisted driving when the driver is tired or distracted) to guide owners to use the function correctly.

However, out of considerations such as competitive strategy, cost, user privacy, and user experience, most car companies, including Xpeng, place only soft constraints on drivers’ abuse or misuse of assisted driving. DMS mainly provides sound or vibration warnings and can be turned off. In other words, it mainly depends on the driver’s own discipline.

Xpeng P7 DMS camera

Policies and regulations are trying to curb this phenomenon. The EU’s E-NCAP requires all new cars to be fitted with DMS from July this year. In China, a 2018 policy made DMS mandatory on “two passengers and one danger” commercial vehicles, and making DMS standard on passenger cars is also reportedly under study [6].

Whether or not this regulation is implemented, every driver using assisted driving should look straight ahead, hold the steering wheel, and be ready to respond to emergencies at any time. After all, however advanced assisted driving becomes, it is only “assisted” driving.


This article is reproduced from: http://finance.sina.com.cn/tech/csj/2022-08-16/doc-imizmscv6488924.shtml
